How Courts Are Treating AI Chat Logs in Criminal Cases

It is 2:30 in the afternoon and you just left the police station. The detective's questions keep replaying in your mind. You are scared, confused, and looking for answers. So you open ChatGPT on your phone and start typing. You describe what happened, what the police asked, what you said, and what you left out. You ask if you did the right thing. The response comes instantly. It feels confidential, like a conversation with a priest or a therapist.

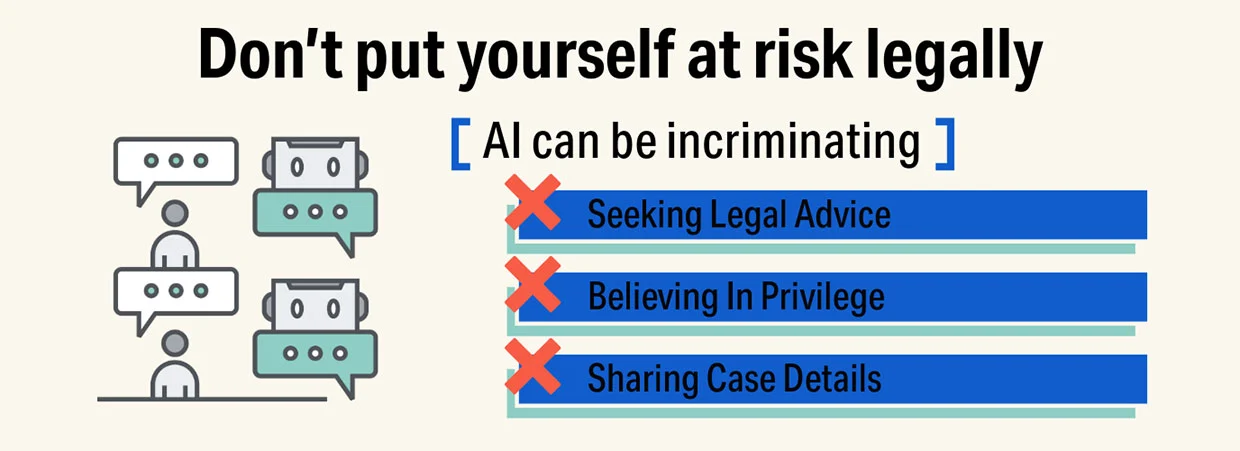

But here is what you need to understand right now: that conversation is not privileged. It is not confidential. And it can be used against you in court.

Every word you type into an AI chatbot becomes a discoverable record that prosecutors can subpoena, forensic examiners can recover, and a jury can hear read aloud at trial. What feels like a private confession may become the prosecution's most powerful evidence.

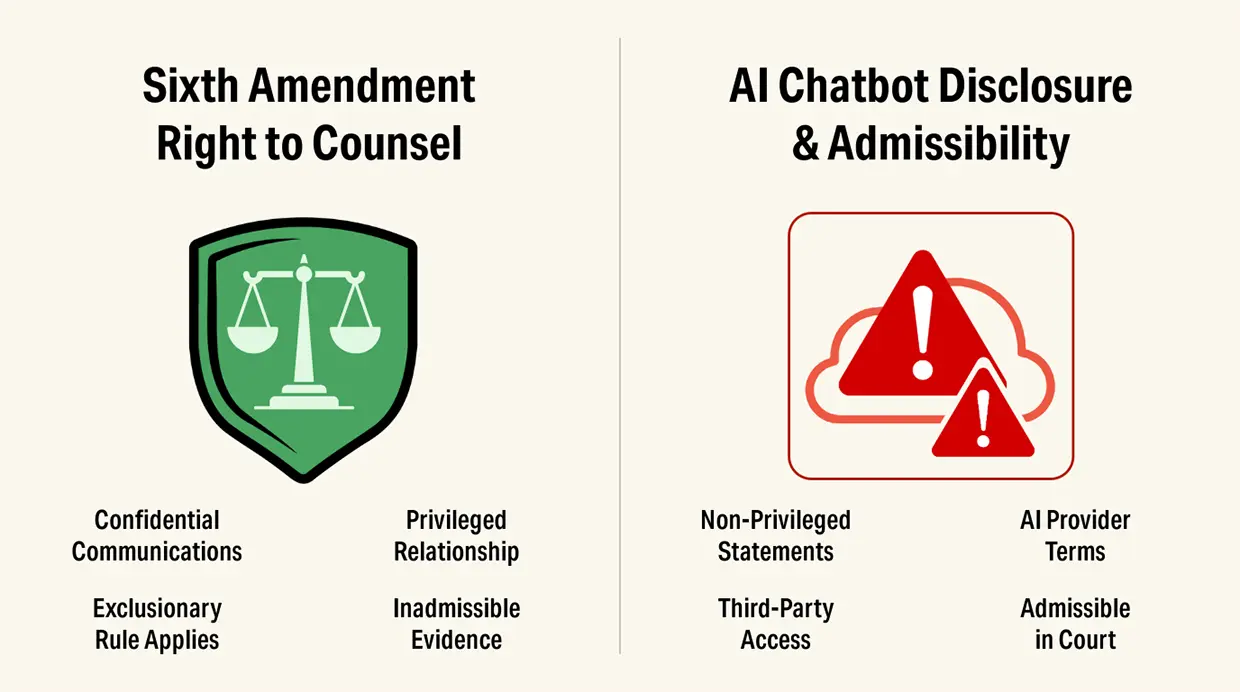

In United States v. Heppner (S.D.N.Y., Feb. 2026), the court made clear that communications with consumer AI tools fall outside the protections that normally shield legal strategy. Judge Rakoff reasoned that AI platforms are third parties because they are operated by independent companies that receive, process, and retain user inputs. This means any information shared is no longer confined to a confidential attorney-client relationship.

As a result, attorney-client privilege does not apply, since the communication is not between a client and a licensed attorney and is not maintained in confidence. The court further held that work product protection is waived when a user shares attorney communications, legal theories, or case strategy with an AI system. By voluntarily disclosing those materials to a third party, the user destroys the confidentiality required to preserve protection, rendering those communications potentially discoverable in litigation.

Why defendants confess to machines

If you are facing criminal charges, you may wonder why anyone would discuss their case with an AI chatbot. The answer is psychology, and it is the same reason false confessions happen in interrogation rooms.

When you are arrested or under investigation, you experience intense stress, fear, and isolation. Your attorney is not always available. The police have stopped questioning you for now, but your mind is racing. You want to understand what is happening, what the evidence means, and what your options are.

AI chatbots fit this vulnerability perfectly, whether or not anyone designed them to. They are available 24/7. They respond instantly and are often free or cheap. They never judge you, never tell you to stop talking, and never remind you of your rights. They validate your feelings and provide answers that sound authoritative, even when they are wrong.

A 2025 survey found that 56% of AI users have sought legal advice from chatbots, and 67% mistakenly believed those conversations were protected by attorney-client privilege. For criminal defendants, the numbers are likely higher. When you are facing years in prison, you will grasp at any source of guidance.

The result is digital confession. Defendants tell AI things they would never tell a detective or even their attorney:

- Exactly where they were and what they were doing

- Who they were with and what those people said

- What they told the police versus what actually happened

- What evidence they know about and what they think it proves

- Their fears about weaknesses in their defense

- Their thoughts about fleeing, destroying evidence, or influencing witnesses

AI does not just answer questions. It creates an atmosphere of false intimacy that encourages disclosure of the very information that can destroy your defense.

AI chat logs are the new confession tapes

Prosecutors have always loved confession evidence. Juries believe it. It is direct, dramatic, and difficult to explain away. For decades, confessions came from interrogation rooms, recorded on audio or video tape.

AI chat logs are becoming the new confession tapes. In some ways, they are even more damaging.

An interrogation recording captures what you said to police in a high-pressure environment. Your attorney can argue you were coerced, exhausted, or confused. But an AI chat log captures what you said when you were alone, thinking clearly, and seeking help. It reads like a deliberate, considered statement of your version of events.

And unlike an interrogation, which police may or may not record, AI conversations are recorded. Every prompt, every response, every edit, every follow-up question is timestamped and stored. The prosecution gets a complete narrative of your thought process, your concerns, and your strategy.

If you tell the AI one version of events and tell the jury something different at trial, the prosecutor will impeach you with your own words. If you ask the AI "how to make this sound better," you have created evidence of consciousness of guilt. If you upload documents and ask for analysis, you may have waived privilege over the entire case file.

You are not just seeking help. You are creating a digital confession that will be played back at your trial.

The Sixth Amendment problem you did not know you had

The Sixth Amendment guarantees your right to counsel. But the confidentiality that makes that right meaningful is fragile, and you can waive it without realizing what you have done.

Attorney-client privilege protects confidential communications between you and your lawyer for the purpose of obtaining legal advice. It requires three elements: a communication, between attorney and client, made in confidence. AI conversations fail the second element outright, and because the provider receives and stores everything you type, they fail the third as well.

When you type your questions into ChatGPT, you are not communicating with your attorney. You are communicating with OpenAI, a corporation that stores your data, potentially uses it to train models, and will produce it in response to a subpoena or other legal process. There is no confidentiality. There is no privilege.

The third-party doctrine makes this explicit. Courts have generally held that information you voluntarily share with third parties (banks, phone companies, social media platforms) loses any reasonable expectation of privacy. AI providers are third parties. When you share information with them, you give up that expectation of privacy and create a record that the government can access.

Something can feel like a confidential conversation and still be fully admissible against you.

How the prosecution gets your AI history

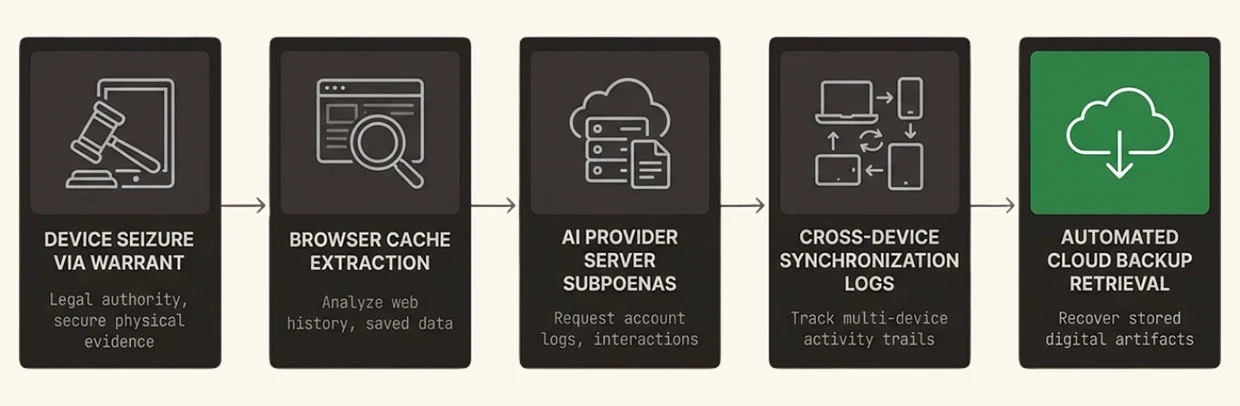

Once you are charged, the prosecution has multiple ways to obtain your AI conversations. Understanding these methods is essential to understanding the risk.

Search warrants for your devices. When police execute a search warrant on your phone, computer, or home, they seize every device that might contain evidence. Forensic examiners then extract browser history, cached data, and application data. Even if you deleted the AI app or cleared your browser history, fragments of your conversations may remain in cache, logs, or synced data.

Subpoenas and search warrants to AI providers. Prosecutors can serve a subpoena or a search warrant on OpenAI, Anthropic, Google, and other AI providers for your account records. The Stored Communications Act creates some procedural hurdles, but law enforcement clears them easily, and providers routinely comply with valid legal process. Your conversation history, timestamps, IP addresses, and device identifiers are all available.

Discovery from you. During the discovery process, the prosecution can request any documents, communications, or electronically stored information related to your case. If you used AI to discuss your case, those conversations are discoverable. Failure to preserve and produce them can result in sanctions and adverse inference instructions.

Co-defendant cooperation. If you discussed your case with AI and mentioned co-defendants, those co-defendants may be subpoenaed to testify about what you told them. The AI conversation becomes evidence of conspiracy, knowledge, or intent, and the people you named in it may help authenticate it.

Jail calls and monitored communications. If you are in custody, your phone calls and electronic communications are monitored. Using AI through a jail tablet or smuggled phone creates an instant record that investigators review daily. We have seen it happen.

The work product catastrophe

Work product protection is one of the most important tools your defense attorney has. It allows them to develop strategy, investigate theories, and prepare your defense without worrying that their mental impressions will be disclosed to the prosecution.

You can destroy this protection in seconds.

Here is how it happens. Your attorney discusses a plan to suppress evidence. You are not sure about one of the ideas, so you describe it to ChatGPT and ask: "Will this work?" You have just disclosed your attorney's work product to a third party. The protection is waived.

Or your attorney explains their theory of the case during a jail visit. Later that night, you summarize that theory for Claude and ask whether it makes sense, using a phone that law enforcement later confiscates. You have just waived work product protection over your attorney's strategy.

Or you upload discovery documents (police reports, witness statements, forensic reports) and ask the AI to analyze them. You have now disclosed the entire case file to a corporation that can be subpoenaed by the prosecution.

The moment you share attorney materials with AI, they stop being protected. The prosecution can argue they are now discoverable. Your attorney's strategy, their assessment of weaknesses in the government's case, their plans for cross-examination, all of it may now be exposed.

From a digital forensics perspective: how we recover what you thought was gone

As forensic examiners who work on criminal defense cases, we see defendants make the same mistake repeatedly. They believe that deleting an AI conversation makes it disappear. They are wrong.

When you use AI tools, you create multiple layers of digital evidence that persist long after you delete the chat:

Browser artifacts. Your web browser stores history, cookies, session data, and cache. Even if you delete the conversation from your AI account, forensic imaging of your device may recover fragments from browser storage. We have recovered complete chat logs from application data files that defendants thought were gone.

Account history. AI providers maintain server-side logs of every conversation, timestamp, IP address, and device identifier. These records persist even after you "delete" a chat. In the New York Times v. OpenAI litigation, a federal court ordered OpenAI to produce 20 million user conversations, including deleted chats that had been preserved under litigation hold.

Synced devices. If you access AI from multiple devices (phone, tablet, computer), your conversations sync across all of them. Deleting from one device does not delete from others. We regularly find complete conversation histories on secondary devices that defendants forgot about.

Cloud backups. Your phone and computer back up to cloud services automatically. Those backups contain application data, including AI conversation fragments. Even if you wipe your device, the backups remain.

Provider retention. OpenAI's privacy policy states they retain data "for legal, tax or accounting purposes" and "to address fraud and abuse" even after deletion requests. They are preserving your data against the possibility of legal process. That legal process may be a subpoena in your criminal case.

Digital evidence does not disappear. It persists, correlates, and resurfaces at the worst possible moment for defendants.

How AI conversations become evidence at trial

Once prosecutors obtain your AI conversations, they will use them at trial. Understanding how this happens helps you understand why you must never create this evidence in the first place.

"Do not put anything in an AI chat you do not want to hear read aloud at your trial."

Impeachment. If your trial testimony differs from what you told the AI, the prosecutor will impeach you with your own words. The jury will hear you say one thing to the chatbot and something different in court. They will conclude you are lying.

Consciousness of guilt. If you asked the AI how to "explain away" evidence, "make this sound better," or "get the charges dropped," the prosecutor will argue this shows consciousness of guilt. Innocent people do not need to craft stories.

Admissions. Your own statements to the AI can be offered against you as statements of a party opponent, which are generally not treated as hearsay when the prosecution offers them. This includes admissions about your whereabouts, your actions, your knowledge, and your intent.

Conspiracy evidence. If you discussed co-defendants with the AI, those conversations become evidence of conspiracy. The prosecution will argue that your discussions with AI show coordination, knowledge, and shared criminal purpose.

Prior inconsistent statements. If you gave different versions of events to the AI at different times, the prosecution will use these inconsistencies to attack your credibility and argue you are adapting your story to fit the evidence.

Sentencing enhancement. At sentencing, the prosecution will use your AI conversations to argue for enhanced penalties. They will claim your use of AI to research your case shows sophistication, planning, and lack of remorse.

Miranda warnings do not apply to chatbots

When police interrogate you in a custodial interview, they must read you your Miranda rights. You have the right to remain silent. You have the right to an attorney. Anything you say can be used against you.

AI chatbots provide no such warnings. They do not tell you that your conversation is being recorded. They do not tell you that your data will be stored, analyzed, and potentially produced to law enforcement. They do not tell you that you are waiving your rights by using their service.

The terms of service that no one reads contain buried disclosures about data retention, legal compliance, and cooperation with law enforcement. But there is no warning when you open the chat interface. There is no reminder that you are creating evidence.

The result is that defendants waive their rights without knowing it. They create self-incriminating evidence without the protections that would apply in a police interrogation.

When AI is safe for defendants, and when it is deadly

This is not legal advice. None of it means you should never use AI. It means you must understand the boundaries that separate safe use from catastrophic mistakes.

Safe uses:

- General education about the criminal justice system and court procedures

- Definitions of legal terms and concepts

- Public information about court rules and filing deadlines

- Generic explanations of constitutional rights

- Hypothetical scenarios that do not reference your actual case

Deadly uses:

- Describing the specific facts of your alleged offense

- Discussing what you told police versus what actually happened

- Uploading discovery documents, police reports, or witness statements

- Sharing or paraphrasing advice from your attorney

- Discussing defense strategy or trial plans

- Asking how to "explain" evidence or make your story more believable

- Researching penalties, sentencing guidelines, or plea options for your specific charges

The test is simple: if it relates to your case, your charges, your evidence, or your defense, it does not belong in AI.

The hidden danger: AI gives wrong advice that sounds right

Even if you ignore all the privilege and evidence risks, using AI for criminal defense advice has another fatal flaw. AI does not know your case. It cannot assess your situation, evaluate the evidence against you, or understand the specific strategies that work in your jurisdiction.

AI generates responses based on patterns in its training data. It has no access to:

- The specific evidence the prosecution has against you

- The credibility of witnesses who will testify

- The tendencies of the judge assigned to your case

- The policies of the prosecutor handling your charges

- The unwritten rules and practices of your local courts

It may give you confidently wrong advice that sounds authoritative but misses critical details. It may suggest defenses that do not apply to your charges. It may misunderstand the elements of the crime you are accused of committing.

You may waive your privilege, create devastating evidence, and still get advice that hurts your case. That is the worst of all possible outcomes.

Protecting your rights: talk to your lawyer, not a chatbot

The solution is simple but requires discipline. When you have questions about your criminal case, talk to your criminal defense attorney. That is what the Sixth Amendment protects.

Your attorney can:

- Evaluate the specific evidence and charges against you

- Provide advice tailored to your jurisdiction, judge, and prosecutor

- Protect your communications from discovery by the prosecution

- Develop strategy without creating a record that can be used against you

- Ensure you do not accidentally waive your rights or create damaging evidence

The protections you need already exist. But they only work if you use them correctly.

If you are concerned that you may have already created evidence through AI use, tell your attorney immediately. Do not hide it. Do not hope it goes away. Early intervention by a forensic expert can assess what evidence exists, how it can be challenged, and how to minimize the damage.

The Black Dog Forensics perspective

At Black Dog Forensics, we work with criminal defense attorneys to understand and challenge digital evidence. We know how prosecutors obtain AI conversations, how they use them at trial, and how to defend against this new category of evidence.

We understand where digital evidence lives, how it is recovered forensically, and how it can be authenticated, challenged, or excluded. Whether you need to assess what evidence the prosecution may have recovered, challenge the admissibility of AI chat logs, or develop a strategy for dealing with digital confessions, we help defense teams understand these risks before it is too late.

What feels like a private conversation today can become the prosecution's key evidence tomorrow. We help defendants and their attorneys understand that before it destroys their case.

346-200-6097
