The Hidden Legal Risks of Using AI for Sensitive Matters

It is 11 PM. You are staring at a demand letter from a former business partner, and your attorney's office closed hours ago. The dispute is keeping you awake, so you open ChatGPT and start typing. You paste in the letter, add details about what "really happened," and ask for advice. The response comes instantly. It feels helpful, confidential, and safe.

But here is what most people do not realize: that conversation just created evidence. Not in some abstract sense. Real, discoverable, potentially damaging evidence that opposing counsel can subpoena, forensic examiners can recover, and a jury can see.

What feels like a private conversation may become a permanent, discoverable record.

Why people overshare with AI

Understanding the legal risk raises an obvious question: if AI conversations are discoverable, why do so many people share sensitive information with them?

The answer lies in psychology. AI chatbots are designed to feel safe, nonjudgmental, and helpful. They are available 24/7 without hourly rates. They respond instantly, validate your concerns, and never tell you that your question is inappropriate. In moments of stress, when you are worried about a legal dispute and cannot reach your attorney, AI feels like a confidential sounding board.

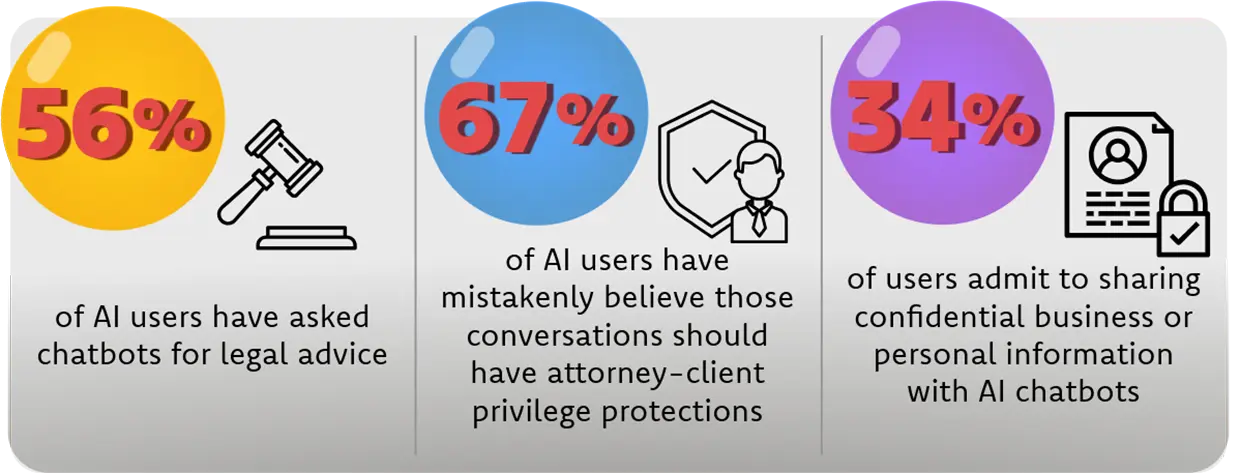

A 2025 survey by Kolmogorov Law found that 56% of AI users have asked chatbots for legal advice, and 67% mistakenly believe those conversations should have attorney-client privilege protections. Even more concerning, 34% of users admit to sharing confidential business or personal information with AI chatbots.

The result is over-disclosure. People tell AI things they would never put in an email:

- Timelines of events with details that contradict their legal position

- Internal communications about the dispute

- Financial concerns and settlement calculations

- Strategy thoughts and litigation plans

- "What really happened" narratives that include admissions

- What was deleted or altered before litigation began

AI does not just answer questions. It encourages disclosure by creating an atmosphere of false privacy.

AI chat logs are the new digital evidence

To understand the risk, compare AI conversations to traditional forms of evidence. Emails, text messages, and financial records have long been standard fare in litigation. Attorneys routinely subpoena them, forensic experts authenticate them, and juries weigh them.

AI chat logs are joining that list. In fact, they may be more valuable than traditional evidence because of their structure. AI conversations are timestamped, sequential narratives that reveal strategy, intent, concerns, and inconsistencies.

When you type a question into ChatGPT at 2 AM about how to respond to a demand letter, you are creating a record of:

- What you were thinking about the case

- What worried you enough to keep you awake

- What facts you emphasized or omitted

- What strategy you were considering

If your later deposition testimony differs from what you told the AI, opposing counsel will use that chat log to impeach your credibility. If you asked the AI to "make this sound less serious," you just created evidence of intent to minimize. If you uploaded documents and asked for analysis, you may have waived privilege over those documents.

You are not just using a tool. You are documenting your case in real time, fully exposed.

Privacy vs. privilege: a critical mistake

Many people conflate privacy with privilege. They assume that because an AI conversation feels private, it must be legally protected. This is a critical mistake.

Attorney-client privilege typically requires three elements:

- A communication between attorney and client

- Made in confidence

- For the purpose of seeking or providing legal advice

AI conversations fail the first element. You are communicating with a corporation, not an attorney. Even if you are discussing legal matters, there is no privilege because there is no lawyer on the other end.

The third-party doctrine makes this explicit. Courts have long held that information you voluntarily share with third parties (banks, phone companies, social media platforms) loses legal protection. AI providers are third parties. When you share information with OpenAI, Anthropic, or Google, you are voluntarily disclosing it to a corporation that can be compelled to produce it in litigation.

Something can feel private and still be fully discoverable.

The work product trap

The most dangerous mistake involves work product. Work product protection covers materials prepared by or for an attorney in anticipation of litigation. It is broader than the attorney-client privilege and protects an attorney's mental impressions, strategies, and case theories.

Here is how the trap works. Your attorney drafts a response to a demand letter and sends it to you for review. You are not sure about one section, so you copy the draft, paste it into ChatGPT, and ask: "Is this language too aggressive?" You have just shared privileged work product with a third party. The protection may now be waived.

Depending on your jurisdiction, attorney work product may lose its protection the moment you share it with AI. Opposing counsel can now argue that the work product is discoverable because you disclosed it to a third party. The strategy your attorney developed, the theories they considered, and the approach they recommended may all be exposed.

This applies to more than just draft documents. If you summarize your attorney's advice for the AI, you are disclosing privileged communications. If you ask the AI to analyze your attorney's strategy, you are waiving work product protection. If you upload case documents and ask for help understanding them, you may be compromising the entire case.

From a digital forensics perspective

As digital forensic examiners, we see how AI conversations become evidence in practice. The technical reality is sobering: if it exists, it can likely be found.

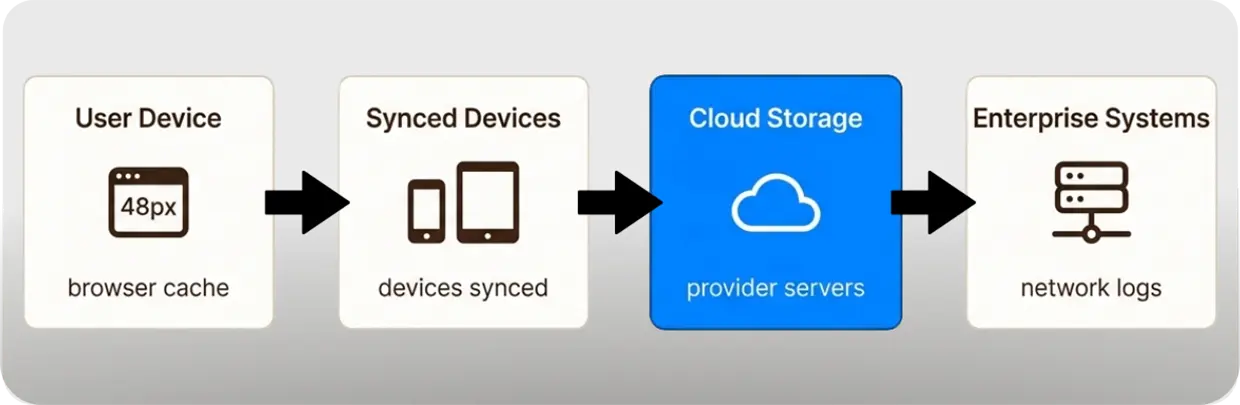

When you use AI tools, you create multiple layers of recoverable data:

- Browser artifacts. Your web browser stores history, cache, cookies, and session data. Even if you delete the conversation from your AI account, forensic analysis of your device may recover fragments from browser storage.

- Account history. AI providers maintain server-side logs of your conversations, timestamps, IP addresses, and device identifiers. These records persist even after you "delete" a chat. In the New York Times v. OpenAI litigation, a federal court ordered OpenAI to produce 20 million user conversations, including deleted chats that had been preserved under a legal hold.

- Synced devices. If you access AI tools from multiple devices (phone, laptop, tablet), your conversations sync across all of them. Deleting from one device does not delete from others, and forensic imaging of any device may reveal the complete conversation history.

- Cloud data. AI providers store your data in cloud infrastructure. Even after user-level deletion, backups and logs may retain the information for extended periods. OpenAI's privacy policy states that they retain data "for legal, tax or accounting purposes" and "to address fraud and abuse" even after deletion requests.

- Enterprise logs. In corporate environments, network monitoring, DLP systems, and admin controls may log AI usage independently of the provider's records.

In litigation, forensic collection targets these sources. We reconstruct timelines, correlate AI usage with other digital activities, and authenticate chat logs for admission as evidence. Digital evidence does not disappear. It persists, correlates, and resurfaces.
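The timeline reconstruction described above can be illustrated with a minimal Python sketch. It is not our actual tooling: the `Event` structure, the source labels, and the sample entries are hypothetical, and it assumes each source has already been parsed into UTC timestamps. It merges events from several sources into one chronological list, then pairs each AI event with other activity that happened within a chosen time window.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Event:
    """One timestamped record from a collected source (hypothetical structure)."""
    timestamp: datetime  # assumed to be timezone-aware UTC
    source: str          # illustrative label, e.g. "browser_history" or "email"
    description: str


def build_timeline(*sources: list[Event]) -> list[Event]:
    """Merge events from every source into one chronological list."""
    merged = [event for source in sources for event in source]
    return sorted(merged, key=lambda e: e.timestamp)


def correlate(timeline: list[Event], ai_source: str,
              window: timedelta) -> list[tuple[Event, Event]]:
    """Pair each AI event with non-AI events that fall within `window` of it."""
    pairs = []
    for ai_event in timeline:
        if ai_event.source != ai_source:
            continue
        for other in timeline:
            if other.source == ai_source:
                continue
            if abs(other.timestamp - ai_event.timestamp) <= window:
                pairs.append((ai_event, other))
    return pairs


if __name__ == "__main__":
    def at(hour: int, minute: int) -> datetime:
        # Hypothetical sample date, used only for illustration.
        return datetime(2025, 3, 4, hour, minute, tzinfo=timezone.utc)

    browser = [Event(at(2, 1), "browser_history", "Visited AI chat site")]
    ai_log = [Event(at(2, 5), "ai_account_export", "Prompt about a demand letter")]
    email = [
        Event(at(2, 20), "email", "Email sent to former business partner"),
        Event(at(9, 0), "email", "Email sent to attorney"),
    ]

    timeline = build_timeline(browser, ai_log, email)
    for ai_event, other in correlate(timeline, "ai_account_export",
                                     timedelta(minutes=30)):
        print(f"{ai_event.description} <-> {other.description} ({other.source})")
```

In this sample, the 2:05 AM prompt is linked to the browser visit and the 2:20 AM email, but not to the 9:00 AM email, which falls outside the 30-minute window. Real examinations involve far more sources and careful handling of clock drift and time zones, which this sketch ignores.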

How this becomes evidence in litigation

The discovery process in civil litigation is designed to uncover relevant evidence. Attorneys use several mechanisms to obtain AI conversations:

- Requests for Production. Opposing counsel can request documents and electronically stored information (ESI) related to the dispute. This explicitly includes AI chat logs, prompts, outputs, and account history.

- Subpoenas to parties. If you are a party to litigation, you can be compelled to produce your AI conversation history. Failure to preserve these records after litigation is anticipated can result in sanctions for spoliation.

- Third-party subpoenas. In theory, attorneys could subpoena AI providers directly. However, the Stored Communications Act (18 U.S.C. § 2701 et seq.) creates legal obstacles to obtaining content from providers without user consent or a court order. The cleaner route is obtaining data from the party who used the AI.

What are opposing counsel looking for? Notes, drafts, communications, and materials used to prepare claims or defenses. AI logs fall directly into these categories. If you used AI to help prepare your position, those conversations may be requested.

The old rule vs. the new rule

Attorneys have long advised clients: do not put anything in writing that you do not want to see in court. That advice needs updating for the AI age.

Old Rule: Do not put anything in writing you do not want to see in court.

New Rule: Do not put anything in an AI chat you do not want to see in court.

The risk is actually greater with AI than with traditional communications. Emails and texts are usually between known parties with some context. AI conversations are timestamped narratives that may include admissions, strategy discussions, and requests to manipulate or reframe facts. They are discoverable, durable, and potentially devastating.

When AI is safe and when it is not

This does not mean you should never use AI. It means you should understand the boundaries. Here is a practical framework:

Safe uses:

- General education about legal concepts and procedures

- Definitions of legal terms and processes

- Public information about court rules and filing requirements

- Generic checklists and templates not specific to your case

- Hypothetical scenarios that do not reference your actual dispute

Unsafe uses:

- Describing the specific facts of your dispute

- Uploading documents related to your case

- Sharing or paraphrasing advice from your attorney

- Discussing case strategy or settlement positions

- Asking the AI to draft, edit, or review documents for your case

- Using AI for a "second opinion" on your attorney's advice

The test is simple: if it is specific to your case, it does not belong in AI.

The hidden risk: AI does not know your case

Even if you ignore the privilege risks, using AI for legal advice has another problem. AI does not know your case. It has no access to:

- The full set of evidence and documents

- The judge's prior rulings and tendencies

- Opposing counsel's style and strategies

- Local rules and unwritten practices

- The specific facts that make your case unique

AI generates responses based on patterns in its training data. It cannot assess credibility, evaluate evidence, or understand the nuances of your jurisdiction. It may give you confidently wrong advice that sounds right but misses critical details.

You may waive privilege and still get the wrong answer. That is the worst of both worlds.

Protecting your legal interests in the AI age

The solution is straightforward. When you have a legal question, ask your attorney. That is what attorney-client privilege protects. Your lawyer can:

- Evaluate your specific situation with full context

- Provide advice tailored to your jurisdiction and judge

- Protect your communications from discovery

- Develop strategy without creating a discoverable record

The protection you need already exists. You just have to use it correctly.

If you are concerned that you may have already created evidence through AI use, consult a forensic expert early. Preserving and analyzing digital evidence before it is lost or altered can make a significant difference in your case strategy.

The Black Dog Forensics perspective

At Black Dog Forensics, we understand how digital evidence shapes litigation outcomes. AI is creating a new category of evidence that many attorneys and clients do not yet fully appreciate.

We know where digital evidence lives, how it is recovered, and how it is used in litigation. Whether you need to preserve evidence, analyze AI usage patterns, or understand what opposing counsel may have recovered, we help clients understand these risks before it is too late.

What feels like a conversation today can become evidence tomorrow.

"Do not put anything in an AI chat you do not want to see in a courtroom."

How it happens:

A business owner facing a contract dispute copies their attorney's draft response and pastes it into ChatGPT asking, "Is this language too aggressive?" They have now shared privileged work product with a third party, potentially waiving protection and creating discoverable evidence of their litigation strategy.

346-200-6097
